Various forms of artificial intelligence (AI) look set to transform medicine and the delivery of healthcare services as more and more potential uses are recognized, while adoption rates for AI continue to climb.

Machine Learning (ML) has revolutionized clinical decision support over the past decade, as has AI enhancement of radiological images, which allows the use of safer low-dose radiation scans. But AI, in whatever form, requires massive amounts of data for modelling, training, and mining. While much of that data is de-identified, some cannot be: training sometimes requires aggregating each patient's full set of medical tests and records, so the patient must be known to the AI for it to learn and model correctly.

But healthcare data is valuable. It's valuable to hackers, who can ransom it back to data custodians or sell the PII and PHI on the darknet. It's valuable to nation states such as China for their own data modelling and AI training. Furthermore, medical data is highly regulated, so a breach can bring fines, punitive damages, restitution and corrective action plans.

AI models are highly valuable, and their development is now the modern-day equivalent of the 1960s 'race to the moon' between the US and USSR, only the competitors today are the USA and the PRC. Consequently, China has been very aggressive in 'acquiring' whatever research it can to jump-start or enhance its own AI development programs. This has included insider theft by visiting professors and foreign students at western universities, and targeted cyberattacks from the outside. China's five-year plan is to surpass the west in its AI capabilities - not just for medical applications but also for military defence. So for both countries, and for others, AI is a strategic imperative. It is unknown whether western governments have executed similar campaigns of cybertheft and cyberespionage against China's AI programs; it is considered likely, though on a much smaller scale. The irony of cyberattacks to steal AI modelling data is, however, not lost on those in cybersecurity, as will be explained shortly.

AI in Cybercrime

AI may become the future weapon of choice for cybercriminals. Its unique abilities to mutate as it learns about its environment, and to masquerade as a valid user and legitimate network traffic, allow malware to go undetected across the network, bypassing all of our existing cyber defensive tools. Even the best NIDS, AMP and XDR are rendered impotent by AI's stealthiness.

AI can be particularly adept when used in phishing attempts. AI understands context and can insert itself into existing email threads. By employing natural language processing to mimic the language and writing style of users in a thread, it can trick other users into opening malware-laden attachments or clicking on malicious links. Unless an organization has sandboxing in place for attachments and external websites, even basic AI-based phishing will have a high rate of success. But things don't stop there.

Offensive AI has been used to weaponize existing malware Trojans. This includes the Emotet banking Trojan, which was recently AI-enabled. It can self-propagate to spread laterally across a network, and contains a password list to brute-force its way into systems as it goes. Its highly extensible framework can host new modules for even more nefarious purposes, including ransomware and other availability attacks. In healthcare, availability is everything. When health IT and IoT systems go down, so does a provider's ability to render care to patients in today's highly digital health system.

Offensive AI can also be used to execute integrity-based attacks against healthcare. This is where the danger really lies. AI blends into the background and uses APT techniques to learn the dominant communication channels, seamlessly merging with routine activity to disguise itself amid the noise. AI can change medical records, altering diagnoses, changing blood types, or removing patient allergies, all without raising alarm.

It's one thing for physicians not to have access to medical records, but to have access to medical records with altered data is another altogether. It's also far more dangerous if the wrong treatment is then prescribed based upon that bad data. This becomes a major clinical risk and patient safety issue. It also erodes trust in the HIT and HIoT systems that clinicians rely upon, leading to physicians questioning the data in front of them or having to second-guess that information.

- Can I trust a medical record?

- Can I trust a medical device?

It also raises some major questions around medical liability.

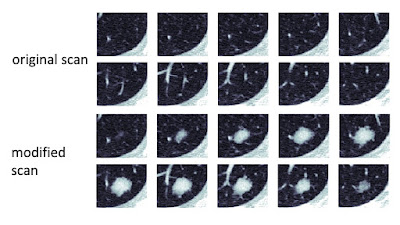

A research study in 2019 at Ben-Gurion University of the Negev was able to compromise the integrity of radiological images, using Deep Learning (DL) to insert fake nodules into a CT image in transit between the CT scanner and the PACS, or to remove real nodules from it. The research wasn't theoretical either, but used a blind study to prove its thesis that radiologists could be fooled by AI-altered images.

The study was able to trick three skilled radiologists into misdiagnosing conditions nearly every time using real CT lung scans, 70 of which were altered by their malware. In the case of scans with fabricated cancerous nodules, the radiologists diagnosed cancer 99 percent of the time. In cases where the malware removed real cancerous nodules from scans, the radiologists said those patients were healthy 94 percent of the time.

The implication of such a powerful tool if used maliciously is obviously huge, resulting in cancers remaining undiagnosed or patients being needlessly misled and perhaps operated on.
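One common way to detect this kind of in-transit tampering is to attach a keyed integrity tag to each image at the scanner and verify it at the archive. The following is a minimal sketch of that idea using HMAC-SHA256 from Python's standard library. It is an illustration only, not a description of how any particular scanner or PACS works: the function names, the placeholder image bytes, and the assumption of a secret key shared out of band between scanner and archive are all hypothetical.

```python
import hashlib
import hmac
import secrets


def sign_image(image_bytes: bytes, key: bytes) -> str:
    """Return a hex HMAC-SHA256 tag computed over the raw image bytes."""
    return hmac.new(key, image_bytes, hashlib.sha256).hexdigest()


def verify_image(image_bytes: bytes, tag: str, key: bytes) -> bool:
    """Recompute the tag and compare it to the supplied one in constant time."""
    expected = sign_image(image_bytes, key)
    return hmac.compare_digest(expected, tag)


# Hypothetical secret key, assumed to be provisioned out of band
# to both the scanner and the archive.
key = secrets.token_bytes(32)

# Placeholder bytes standing in for a CT slice; a real image would be far larger.
original_scan = b"\x00\x01\x02 placeholder bytes standing in for a CT slice"
tag = sign_image(original_scan, key)

# Simulate malware altering the image between scanner and archive.
tampered_scan = original_scan.replace(b"\x01", b"\xff")

print(verify_image(original_scan, tag, key))   # unaltered image passes
print(verify_image(tampered_scan, tag, key))   # altered image fails
```

A tag like this only helps if the key is kept away from the attacker and the check is performed before a radiologist sees the image. It would not catch an image altered before the tag was computed.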

In the run-up to the 2016 presidential election, Hillary Clinton decided to share a recent CT with the media to prove that she was suffering from pneumonia rather than long-term health concerns such as cancer. Had her CT scan been altered, it's likely that she would have been forced to withdraw from the election. AI could thus have been used to influence or alter the outcome of a US presidential election. It could therefore be a powerful tool for nefarious nation states to undermine democracy, or for radical domestic groups wishing to destabilize a country or change the outcome of an election.

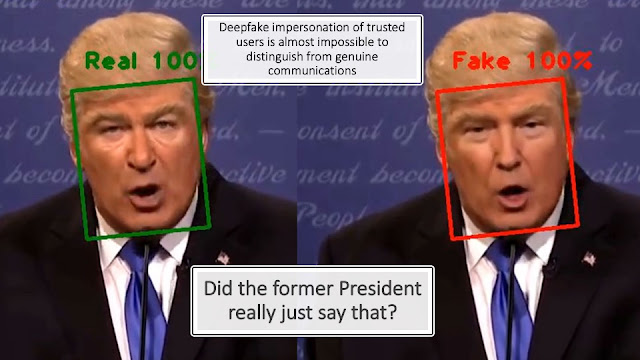

Deepfakes

The rising capabilities and use of Deepfakes for Business Email Compromise (BEC), whether using audio or video, will render humans unable to differentiate between true and false, real and fake, legitimate and illegitimate.

"Was that really the CEO I just had an interactive phone conversation with telling me to wire money overseas?"

But Deepfakes could be very dangerous from a national security perspective also, domestically and internationally. "Did the President really say that on TV?"

Compared to Ronald Reagan's 1984 hot mike gaffe about bombing Russia, a deepfake might be much more convincing and a lot more concerning, as the majority of people would likely believe what they saw and heard. After all, much of the US population believes what it reads on social media or on news sites that constantly fail fact checking. But the US population is not alone, as we have seen in Russia, where most of the population believes the state propaganda presented on TV about Putin's intervention in Ukraine to prevent Nazis.

Cognitively, we are not prepared for deepfakes and not preconditioned to critically evaluate what we see and hear in the same way that we may challenge a photo in a magazine that may have been photoshopped. AI obviously has massive and as yet untapped PsyOps (psychological operations) capabilities for the CIA, FSB, and others.

As these and other 'Offensive AI' tools develop and become more widespread, it is likely that cybersecurity practitioners will need to pivot towards greater use of 'Defensive AI' tools: tools that can recognize an AI-based attack and move quickly (far quicker than a human) to block it. Indeed, it is likely that future AI-powered assaults will far outpace human response teams, and that near-nanosecond responses will be needed to prevent the almost pandemic spread of malware across the network.
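The detect-then-block loop described above can be sketched in a few lines. The example below is a deliberately simple statistical stand-in, not AI: it flags a host whose connection rate deviates sharply from its own baseline and adds it to a block list with no human in the loop. A real defensive tool would use far richer models and signals. The function names, host names, threshold, and traffic figures are all hypothetical.

```python
from statistics import mean, stdev


def is_anomalous(baseline: list[float], observed: float, threshold: float = 3.0) -> bool:
    """Flag an observation more than `threshold` standard deviations from the baseline mean."""
    mu = mean(baseline)
    sigma = stdev(baseline)
    if sigma == 0:
        # A perfectly flat baseline: any change at all is treated as anomalous.
        return observed != mu
    return abs(observed - mu) / sigma > threshold


def respond(host: str, baseline: list[float], observed: float, blocked: set[str]) -> bool:
    """Block the host automatically if its behaviour is anomalous; return True if blocked."""
    if is_anomalous(baseline, observed):
        blocked.add(host)
        return True
    return False


# Hypothetical connections-per-minute history for two workstations.
baseline = [100, 110, 95, 105, 98, 102]
blocked: set[str] = set()

respond("ward-pc-01", baseline, 104, blocked)   # within normal variation
respond("ward-pc-02", baseline, 900, blocked)   # sudden burst of connections

print(sorted(blocked))
```

The point of the sketch is the response time rather than the detector: once the decision is automated, blocking happens as soon as the anomaly is observed rather than when an analyst next checks a dashboard.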

According to Forrester's "Using AI for Evil" report, "Mainstream AI-powered hacking is just a matter of time."